Published April 9, 2026 · Updated April 10, 2026 · 12 min read

HappyHorse-1.0 Revealed: Alibaba's #1 Open-Source AI Video Model

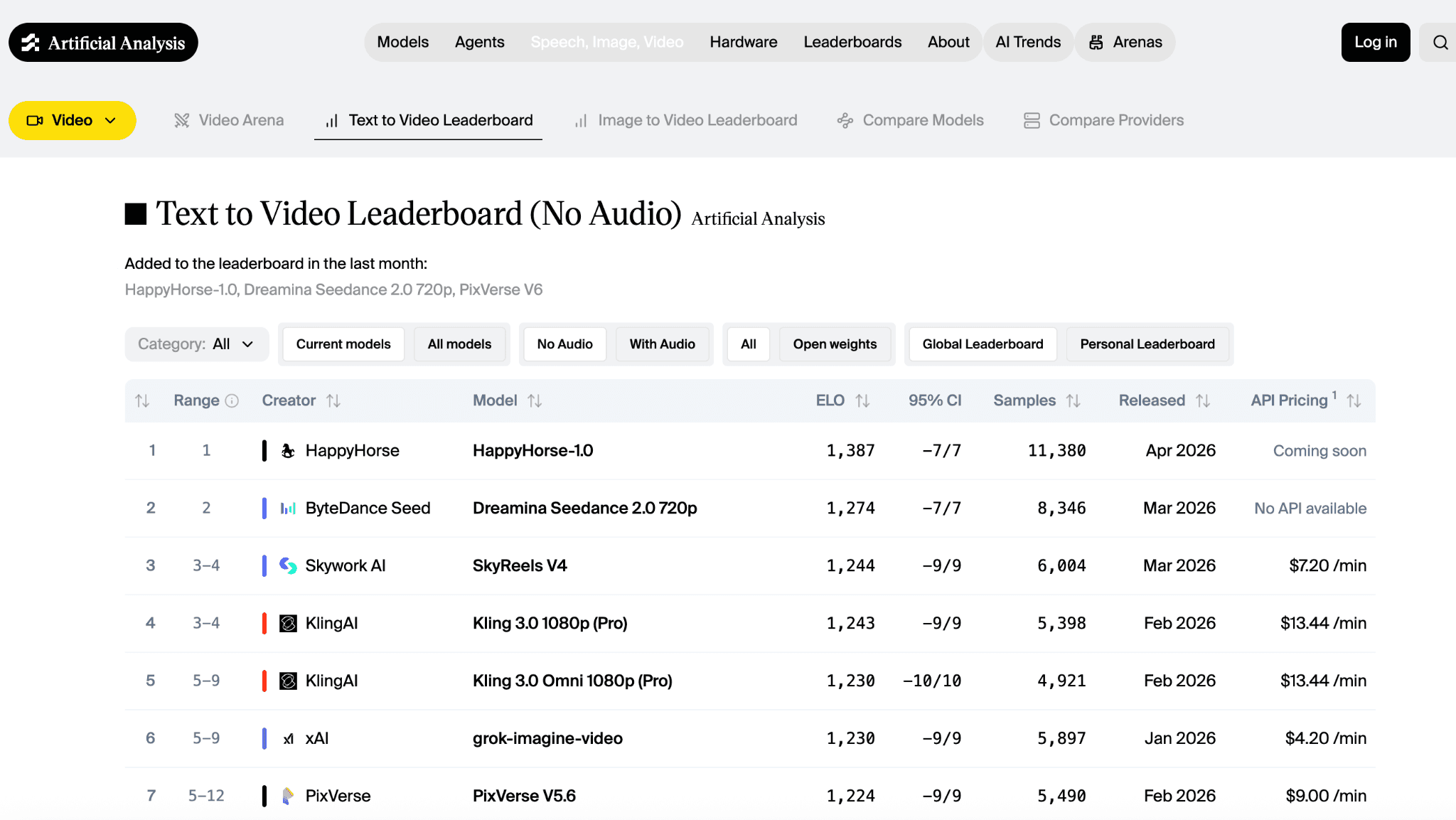

The model that arrived anonymously and immediately swept the Artificial Analysis blind leaderboards is no longer a mystery. As of April 10, 2026, HappyHorse-1.0 has been confirmed as the work of Alibaba's Taotian Group Future Life Lab — and it is still sitting at #1 above Sora, Veo, Seedance, Kling, and PixVerse.

BREAKING · Updated April 10, 2026

Alibaba has now publicly confirmed that HappyHorse-1.0 was built by the Future Life Lab inside its Taotian Group, led by Zhang Di — the former Kuaishou VP who previously ran the Kling AI video team. The model is a 15-billion-parameter unified single-stream transformer with native synchronized audio-video generation, seven-language lip sync, and native 1080p output. It remains #1 on both the text-to-video and image-to-video Artificial Analysis leaderboards. Coverage below from Bloomberg, The Information, CNBC, and Sherwood News.

A new AI video model called HappyHorse-1.0 arrived with the kind of entrance that makes the rest of the market look as if someone turned on the lights mid-performance. It did not first appear through a polished launch event, a splashy research paper, or a carefully staged product rollout. Instead, it appeared in the Artificial Analysis Video Arena and rapidly rose to the top of key blind-comparison leaderboards, where users vote on outputs without knowing which model produced them (Artificial Analysis, 2026a, 2026b).

That unusual entrance triggered five big questions across the AI video world. What exactly is HappyHorse-1.0? Who made it? Is it really open source? Why did it become popular so quickly? And how does it compare with major competitors such as Seedance, Kling, and PixVerse? As of April 10, 2026, most of those answers are on record. The authorship question has been publicly resolved: HappyHorse-1.0 comes from Alibaba's Taotian Group, where it was built by the Future Life Lab. The open-source release and the commercial product stack around the model, however, are still catching up to its benchmark results (Bloomberg, 2026; Osawa, 2026; Sherwood News, 2026).

What is HappyHorse-1.0?

HappyHorse-1.0 is a next-generation AI video generation model that has already performed exceptionally well in blind human-preference evaluations. On the Artificial Analysis leaderboards, it leads both the text-to-video leaderboard and the image-to-video leaderboard. Artificial Analysis explains that these rankings are based on Elo scores derived from blind user votes, meaning users compare two model outputs without knowing which model made which video (Artificial Analysis, 2026a, 2026b).

That matters because AI video can be a carnival of carefully selected demos. A model may look brilliant in one hand-picked example and stumble badly in everyday use. Blind comparison is not perfect, but it is much harder to game than a self-reported benchmark. In that environment, HappyHorse-1.0 is not merely visible. It is winning. As of April 10, 2026, Artificial Analysis lists HappyHorse-1.0 at the top of text-to-video with an Elo around 1,388, and at the top of image-to-video with an Elo around 1,413 — the only model holding #1 on both tracks simultaneously (Artificial Analysis, 2026a, 2026b).
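Because these rankings are Elo-style scores, a rating gap translates into an expected head-to-head preference rate. The sketch below uses the textbook Elo formulas (an expected-score function and a single K-factor update after one vote). It is an illustration only: Artificial Analysis's exact rating method may differ, and the rival rating of 1,338 and K = 32 are hypothetical values chosen for the example.

```python
# Textbook Elo formulas, shown only to illustrate what a rating gap means.
# Assumption: Artificial Analysis may compute its scores differently.


def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that model A's output is preferred over model B's."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def elo_update(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0):
    """Return both ratings after one blind vote (classic sequential Elo update).

    k=32 is a common illustrative K-factor, not a value taken from the source.
    """
    e_a = expected_score(rating_a, rating_b)
    s_a = 1.0 if a_won else 0.0
    delta = k * (s_a - e_a)
    # Zero-sum: whatever A gains, B loses.
    return rating_a + delta, rating_b - delta


if __name__ == "__main__":
    # Hypothetical 50-point gap: 1,388 vs. an invented rival rating of 1,338.
    p = expected_score(1388, 1338)
    print(f"{p:.3f}")  # prints 0.571
```

Under this model, a 50-point lead means the leader is expected to win roughly 57% of blind votes against that rival. Ratings are only meaningful within a single leaderboard, so the text-to-video score of about 1,388 and the image-to-video score of about 1,413 should not be compared with each other directly.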

On the technical side, HappyHorse-1.0 is described by its creators as a 15-billion-parameter unified single-stream transformer that jointly generates video and audio from a single prompt. It supports both text-to-video and image-to-video, produces native 1080p output, and handles synchronized lip sync across seven languages (Bloomberg, 2026; Sherwood News, 2026).

Who made HappyHorse-1.0?

For about a week, the authorship of HappyHorse-1.0 was treated publicly as a mystery. Reports and commentary around its sudden rise consistently described it as anonymous or pseudonymous, and that ambiguity became part of the model's mystique almost immediately.

That mystery is now over. By April 10, 2026, The Information, Bloomberg, CNBC, and Sherwood News had all reported that HappyHorse-1.0 was built by Alibaba, specifically by the Future Life Lab inside Taotian Group, Alibaba's e-commerce arm. The lab is led by Zhang Di, a former Vice President at Kuaishou who previously ran the Kling AI video team. In other words, someone who had already shipped one of the most recognized AI video systems in the world chose to launch an even stronger one, quietly, from inside Alibaba (Bloomberg, 2026; Osawa, 2026; Sherwood News, 2026).

This is important context. It means HappyHorse-1.0 is not a hobby lab project or a stealth academic release. It is a frontier model with one of the most experienced AI video leaders in the world behind it, now backed by one of the largest technology companies in Asia. The anonymity at launch appears to have been a deliberate positioning choice: let the outputs do the talking on a blind leaderboard before anyone knew which logo was attached (Bloomberg, 2026; Sherwood News, 2026).

Is HappyHorse-1.0 really open source?

Here the answer is mostly yes in terms of license and stated intent, with a caveat on timing.

The Hugging Face model page for HappyHorse-1.0 uses an Apache-2.0 license label and explicitly brands the project as an open video model. Alibaba's team has also publicly stated that the model will be fully open sourced, with model weights and a GitHub repository coming soon (happyhorseai, n.d.; Sherwood News, 2026).

The caveat is that, as of April 10, 2026, the practical release is not yet complete. The Hugging Face page does not yet read like a mature open release that has been widely deployed across the ecosystem. Inference providers are still ramping up, Artificial Analysis lists the model's API pricing as "coming soon," and the weights are still listed as pending. That is why, for most creators, the fastest way to generate HappyHorse-class video today is a hosted platform that already connects to leading video models. Happy Horse AI is one of the few that is live and usable without a waitlist (Artificial Analysis, 2026a, 2026b; happyhorseai, n.d.).

Why did HappyHorse-1.0 suddenly become so popular?

HappyHorse-1.0 became popular because it entered the market through the one door that always creates chatter: visible performance. It did not lead with reputation. It led with outcomes. When an entrant of unknown origin appears on a respected blind-comparison leaderboard and outranks familiar names, people start paying attention fast (Artificial Analysis, 2026a, 2026b).

The model's rankings explain most of the sudden heat. On Artificial Analysis, HappyHorse-1.0 currently leads text-to-video ahead of Dreamina Seedance 2.0, Kling 3.0 Pro, and Veo 3.1. It also leads image-to-video ahead of Seedance 2.0, PixVerse V6, and Kling 3.0 Omni. The model did not simply arrive and dominate every category equally. It arrived and seized the most talked-about categories while remaining competitive across the rest (Artificial Analysis, 2026a, 2026b).

There was also a second force behind the buzz: mystery. For the first week, HappyHorse-1.0 was a pseudonymous product, and pseudonymous products create a natural narrative engine. A named product launch answers questions. A shadowy one manufactures them. When Alibaba finally confirmed authorship on April 10, that curiosity converted directly into a second wave of global coverage — the kind of two-act rollout that most launches cannot stage even on purpose (Bloomberg, 2026; Sherwood News, 2026).

How does HappyHorse-1.0 compare with Seedance, Kling, and PixVerse?

The fairest answer is that HappyHorse-1.0 looks strongest right now in blind preference on core leaderboards, but its rivals still have advantages in product maturity, workflow completeness, and commercial readiness.

Compared with Seedance

Seedance 2.0 remains one of the most formidable competitors in the field. ByteDance describes it as a unified multimodal audio-video generation architecture that supports text, image, audio, and video inputs. The company highlights motion stability, audio-video joint generation, and director-level control with reference inputs. In short, Seedance presents itself as not just a model but a highly developed creative system (ByteDance Seed, 2026).

On the leaderboards, however, HappyHorse-1.0 currently outranks Dreamina Seedance 2.0 in both text-to-video and image-to-video. Seedance still leads the audio-enabled image-to-video category by a narrow margin. This paints an interesting picture: HappyHorse is leading in the most visible quality contests, while Seedance still demonstrates strength in multimodal audio-video integration and overall product maturity (Artificial Analysis, 2026a, 2026b; ByteDance Seed, 2026).

Compared with Kling

Kling 3.0 Omni is a more mature and better-documented product. Its official guide describes an all-in-one multimodal system with native audio, multi-shot generation, reference-image and reference-video control, and support for videos up to 15 seconds long. Kling also clearly documents pricing and output modes, including both 1080p and 720p options (Kling AI, 2026).

There is a poetic angle to the comparison: the person who previously ran the Kling team is the same person now behind HappyHorse-1.0. Zhang Di led Kling at Kuaishou before moving to Alibaba's Taotian Group to lead Future Life Lab. On Artificial Analysis, HappyHorse-1.0 now ranks above Kling's flagship 3.0 variants, which means the former head of Kling has effectively shipped a model that beats his own previous work on blind preference (Artificial Analysis, 2026a, 2026b; Bloomberg, 2026; Kling AI, 2026).

Compared with PixVerse

PixVerse V6 occupies yet another lane. PixVerse says V6 improves camera work, character performance, and multi-shot generation with native audio, and it frames the release as useful for both creative and commercial workflows. Its launch materials emphasize stronger continuity, better physical realism, and the ability to generate multi-shot short films with native audio from a single prompt (PixVerse, 2026).

On the Artificial Analysis image-to-video leaderboard, PixVerse V6 currently ranks below HappyHorse-1.0 and Seedance 2.0 but still holds a strong position near the top. For a team that values workflow depth and commercial packaging, PixVerse may still be the more practical choice today, even if HappyHorse is the model drawing the loudest gasps from the balcony (Artificial Analysis, 2026b; PixVerse, 2026).

So who is actually stronger?

If "stronger" means current blind user preference on the major Artificial Analysis tracks, HappyHorse-1.0 has the edge right now. If "stronger" means the overall package of product readiness, polished user experience, and documented workflow controls, then Seedance, Kling, and PixVerse each still have serious claims of their own. The market is not facing a simple overthrow. It is watching a new contender force everyone to look twice at the leaderboard and then three times at the product stack (Artificial Analysis, 2026a, 2026b; ByteDance Seed, 2026; Kling AI, 2026; PixVerse, 2026).

What should creators and builders do with this information?

The sensible response is neither breathless worship nor cynical dismissal.

For creators, HappyHorse-1.0 is worth watching because voters in blind head-to-head comparisons consistently prefer its outputs. That is a real signal, not decorative wallpaper. But until the Apache-2.0 weights and official API fully ship, the practical move is to generate with a hosted platform that already offers HappyHorse-class quality through leading video models. That is exactly what Happy Horse AI provides: text-to-video and image-to-video, no waitlist, with paid plans for active generation.

For builders and product teams, the more interesting question is what happens when HappyHorse-1.0's weights actually land. If Alibaba follows through on a clean open release, the competitive map of AI video shifts again — this time in favor of any product team fast enough to integrate the new model into a real workflow ahead of its rivals (Artificial Analysis, 2026a, 2026b; Bloomberg, 2026; happyhorseai, n.d.).
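For teams planning that integration, the stated 15-billion-parameter count allows a rough estimate of how much memory the weights alone would occupy. The sketch below is back-of-envelope arithmetic, not a published requirement: the precision the weights will ship in is unknown, and the figures exclude activations, caches, and any separate encoders or decoders the pipeline may need.

```python
# Rough weight-memory estimate for a 15B-parameter model.
# Assumption: memory = parameter count x bytes per parameter (weights only;
# activations, caches, and auxiliary components are not included).

PARAM_COUNT = 15e9  # parameter count as reported for HappyHorse-1.0

# Bytes per parameter for common storage precisions (illustrative choices).
BYTES_PER_PARAM = {
    "fp32": 4.0,
    "bf16": 2.0,
    "fp8": 1.0,
    "int4": 0.5,
}


def weight_memory_gb(params: float, dtype: str) -> float:
    """Return approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    for dtype in BYTES_PER_PARAM:
        print(f"{dtype}: ~{weight_memory_gb(PARAM_COUNT, dtype):.1f} GB")
```

At bf16, for example, the weights alone come to roughly 30 GB before any runtime overhead, which is why quantization and offloading choices will matter for anyone self-hosting the model.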

Final thoughts

HappyHorse-1.0 matters because it has already done the hardest part of any new model launch: it made people care before it fully explained itself. It did that by winning visible blind-comparison contests against serious competitors. That alone would have made it notable. The anonymous launch, the Alibaba reveal, and the open-source promise only made the story more combustible (Artificial Analysis, 2026a, 2026b; Bloomberg, 2026; Sherwood News, 2026).

As of April 10, 2026, the most accurate summary is this: HappyHorse-1.0 is a genuinely significant AI video model whose leaderboard performance is real and impressive; it was built by Alibaba's Taotian Group Future Life Lab under Zhang Di; and its open-source identity is credible and loudly claimed, but not yet fully delivered in the form most users will eventually want (Artificial Analysis, 2026a, 2026b; Bloomberg, 2026; happyhorseai, n.d.; Sherwood News, 2026).

In other words, HappyHorse is not just a new model. It is a question mark that learned how to sprint — and has now taken off its mask.

References

- Artificial Analysis. (2026a). Text to video leaderboard – Top AI video models.

- Artificial Analysis. (2026b). Image to video leaderboard – Top AI video models.

- Bloomberg. (2026, April 10). Stealth Alibaba video AI model tops global ranking on debut.

- ByteDance Seed. (2026). Seedance 2.0.

- happyhorseai. (n.d.). Happy Horse AI Video Generator.

- Kling AI. (2026, February 6). Kling Video 3.0 Omni model user guide.

- Osawa, J. (2026, April 9). Alibaba anonymously launches new AI video model. The Information.

- PixVerse. (2026). PixVerse V6.

- Sherwood News. (2026, April 10). Creator of mysterious "HappyHorse" text-to-video model is Alibaba.
